The 2-Sigma Problem: The 1:1 Tutor

The Promised Land for education has been 1:1 tutoring since Aristotle tutored the young prince Alexander the Great. The only technology in history capable of delivering it arrived. VCs preached ad nauseam about that utopian application while they funded literally anything else instead. And still, no one’s building it to its full potential.

In 1984, Benjamin Bloom found students taught with one-on-one tutoring performed two standard deviations better than those in traditional classrooms. The 50th percentile tutored student outperformed 98% of peers taught ordinarily.

While Bloom’s study design had issues, rigorous modern studies put tutoring effects at 0.35–0.50 standard deviations. Still, that means even an average tutor moves you from the 50th to roughly the 67th percentile! A world-class tutor would boost you even further.
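As a quick sanity check on those percentiles, here is a minimal sketch of the conversion from effect size to percentile. It assumes test scores are normally distributed with the same spread in tutored and untutored groups (a simplifying assumption of ours, not something the studies guarantee), and the function name is hypothetical.

```python
from statistics import NormalDist


def percentile_after_effect(effect_size_sd: float, start_percentile: float = 50.0) -> float:
    """Percentile (within the untutored population) reached by a student who
    starts at `start_percentile` and gains `effect_size_sd` standard deviations.

    Assumption: scores are normally distributed with equal variance.
    """
    std_normal = NormalDist()  # mean 0, standard deviation 1
    start_z = std_normal.inv_cdf(start_percentile / 100.0)
    return 100.0 * std_normal.cdf(start_z + effect_size_sd)


# Modern estimates (0.35 and 0.50 SD) and Bloom's original 2 SD claim.
for effect in (0.35, 0.50, 2.0):
    print(f"{effect:.2f} SD -> {percentile_after_effect(effect):.1f}th percentile")
```

Under that assumption, 0.35-0.50 SD lands a median student somewhere between roughly the 64th and 69th percentile, and Bloom’s 2 SD lands them near the 98th.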

That’s by far the most effective education remedy. And also the least scalable.

Over the last 10+ years, several teams have built pre-LLM deterministic systems that route students to questions based on their answers to prior questions, effectively like a complex flowchart.

These systems also seek to utilize the few other well-validated learning techniques like spaced repetition, learning to mastery, and immediate feedback.
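To make the "complex flowchart" concrete, here is a toy sketch of deterministic routing with a mastery gate, immediate feedback, and a spaced-repetition schedule. The skill list, the three-in-a-row mastery rule, and the doubling review gap are all illustrative assumptions of ours, not how any of the named products actually work.

```python
# Hypothetical skill sequence, ordered so each skill builds on the previous one.
SKILLS = ["fractions", "ratios", "proportions"]
MASTERY_STREAK = 3  # assumed rule: three correct answers in a row counts as mastery


def record_answer(streaks: dict, skill: str, correct: bool) -> None:
    """Immediate feedback: update the streak right after each answer."""
    streaks[skill] = streaks.get(skill, 0) + 1 if correct else 0


def next_skill(streaks: dict):
    """Learning to mastery: stay on the first skill that is not yet mastered."""
    for skill in SKILLS:
        if streaks.get(skill, 0) < MASTERY_STREAK:
            return skill
    return None  # everything mastered


def review_gap_days(successful_reviews: int) -> int:
    """Spaced repetition: double the gap after each successful review (1, 2, 4, ...)."""
    return 2 ** successful_reviews


streaks = {}
for correct in [True, True, False, True, True, True]:
    record_answer(streaks, next_skill(streaks), correct)
print(next_skill(streaks))  # prints "ratios": fractions is now mastered
```

Note that nothing here is generated or adaptive beyond the branching rules, which is the limitation the next section turns to.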

They appear relatively effective. ASSISTments demonstrated gold-standard evidence with 0.18-0.29 SD effect sizes in RCTs with 2,800 students. DARPA spent $100M over seven years to build a digital tutor to train IT professionals for the Navy, with reported effect sizes of 1-3 SD. Synthesis and Math Academy believe they’ll reach multiple-SD effect sizes for K-12 math, though some beg to differ.

The Product is Stuck 20 Years in the Past

Regardless of these systems’ actual real-world efficacy, we believe they’ll struggle to gain adoption because the richness of the learning experience remains limited to the dynamic question routing algorithm.

The user experience feels static, lifeless, procedural. Text on a screen. Maybe a still image. And a multiple choice box waiting at the bottom.

The vast majority of people don’t enjoy learning this way and won’t learn optimally even if they do push onwards.

The pedagogical engine may be doing something clever underneath and the content may be quality, but the surface the student actually touches is indistinguishable from a textbook that grades itself.

They feel as close to a digitized version of a world-class in-person tutor as licking a picture of ice cream on your laptop feels to eating the real thing.

The Elephant in the Room That No One is Talking About

Modern generative AI is the only technology ever that may actually be able to deliver this utopian vision of world class one-on-one tutoring with a rich user experience at nominal cost. And, it may even be able to fix both the product issues and the economics.

Yet no one is building towards that future. In fact, nearly every edtech company we spoke with emphatically disavowed its usefulness, saying that it may be able to one-shot a lesson with 80% of the desired quality but that the remaining 20% makes it useless. Even after we pointed out that things may be different in a few years, they kept up the meme of saying “my profession will be the last one standing.”

Exponentials are hard for even math teachers to internalize.

Our core thesis is that engagement and substantive learning no longer have to be diametrically opposed.

When the teaching is effective, learning can be among the most rewarding, confidence-boosting, and even enjoyable activities. It’s the tediousness of stumbling around aimlessly and not having the prior foundational knowledge to even have a chance that makes it intolerable. The best teachers in the world quickly close the gap between effort and the rewarding aha moment while making you feel empowered and heard.

A superhuman teacher will soon be buildable. Studies have suggested that LLMs with scaffolds are already halfway decent 1:1 tutors. Current accuracy and hallucination problems can already be contained to ~1% with proper structuring. Context length remains an engineering challenge, as response quality and coherence degrade as the conversation goes on, similar to compaction in Claude Code sessions. However, within two years, they’ll almost certainly be capable of world-class on-the-fly curriculum generation.

Moreover, LLMs are already showing glimmers of reliably generating rich, personalized multimodal content. Over the last year, they’ve been able to generate a custom podcast based on your inputted content. Now, they can one-shot rich dynamic math visualization videos:

Claude can make blue1brown animations in minutes.

— Lior Alexander (@LiorOnAI) January 27, 2026

Education is about to explode. pic.twitter.com/3a1dfqAlfO

Introducing Cinematic Video Overviews, the next evolution of the NotebookLM Studio. Unlike standard templates, these are powered by a novel combination of our most advanced models to create bespoke, immersive videos from your sources.

— NotebookLM (@NotebookLM) March 4, 2026

Rolling out now for Ultra users in English! pic.twitter.com/eHR1YqpxRN

Soon these multimodal experiences will be interactive. You could interrupt them to ask questions even by voice and get thoughtful responses back. Instruction shifts to dialogue. The product shifts from passive consumption to fractals of active experiential learning.

These multimodal capabilities will only get richer, more sophisticated, and more immersive as companies like Compound portfolio company Runway push towards world models. History classes where you’re physically immersed within the moment in history and can navigate the scene and ask the person you’re studying questions. Video games where you learn a core lesson via a GTA-quality game.

This is what learning looks like in spatial computing 👀 pic.twitter.com/wknxZjEJL1

— Adam KP (@AdamKPx) March 2, 2026

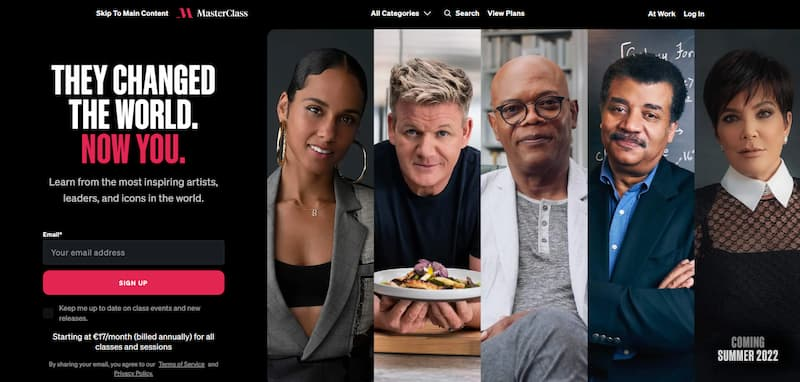

Or imagine virtual avatars acting as guiding instructors. It could enable a financially viable version of Masterclass where celebrities don’t need to spend days of their exorbitantly valuable time on set recording the course, only license their likeness to a good cause.

Another way to make the learning more engaging without sacrificing substance is personalization. A kid is currently obsessed with baseball, so his favorite superstar takes him through the lesson and all the word problems are based around baseball. The system should also pick up that he’s a visual learner who responds best to a certain type of question.

Learning Meta Learning

The system should be able to autonomously learn pedagogy or the algorithm for how people in general and a specific person in particular learn best. So it should be able to efficiently run experiments, learn from those low data environments, and then change its own behavior accordingly.
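One well-known way to run experiments efficiently with little data is a multi-armed bandit. The sketch below uses Thompson sampling to pick among teaching strategies and shift towards whichever works for a given student. This is a technique we are swapping in for illustration, not a description of any existing product; the strategy names, the simulated success rates, and the binary "did the student get the follow-up question right" signal are all hypothetical.

```python
import random


class StrategyBandit:
    """Thompson sampling over teaching strategies with Beta posteriors."""

    def __init__(self, strategies):
        # Beta(1, 1) prior for each strategy: no initial opinion about what works.
        self.successes = {s: 1 for s in strategies}
        self.failures = {s: 1 for s in strategies}

    def choose(self, rng=random):
        # Draw one plausible success rate per strategy and pick the best draw.
        # Uncertain strategies still get tried; proven ones get tried more often.
        draws = {
            s: rng.betavariate(self.successes[s], self.failures[s])
            for s in self.successes
        }
        return max(draws, key=draws.get)

    def update(self, strategy, learned: bool):
        # Change behavior based on the observed outcome.
        if learned:
            self.successes[strategy] += 1
        else:
            self.failures[strategy] += 1


# Simulated student with made-up success rates per strategy.
rng = random.Random(0)
true_rates = {"worked_example": 0.7, "video": 0.5, "quiz_first": 0.4}
bandit = StrategyBandit(true_rates)
for _ in range(300):
    strategy = bandit.choose(rng)
    bandit.update(strategy, rng.random() < true_rates[strategy])
print(bandit.successes, bandit.failures)
```

The appeal for low-data settings is that the bandit starts adapting after a handful of interactions rather than waiting for a full experiment to finish.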

It could in principle use all the data from the user’s interactions: their answers, their time-to-response, the confidence in their vocal intonations, their facial expressions via webcam, what pieces of information their eyes focus on while thinking, etc.

The latter would only of course be possible if people got comfortable with the security of on-device AI and / or advanced cryptographic privacy preserving tech like zkML.

The technically ambitious version of this would be to build a multimodal transformer that takes all these inputs and outputs a real-time probability distribution over cognitive states. Not just engaged/disengaged but fine-grained states like "executing a memorized procedure without understanding" vs "experiencing productive struggle."

Maybe the best solution to training a robust system is using frontier LLMs fine-tuned on real student interaction data to simulate millions of student agent personas, then training the learning algorithm in simulation, and then closing the sim-to-real validation gap with small batches of students.

Sci-Fi Manifestations of Bloom’s Vision

How should one apply such a learning engine? We’re thrilled about the first-order applications of the ideas above like STEM tutoring, SAT prep, or career development.

But there are so many extensions that are under-discussed. Below are some of the eclectic ideas we’ve toyed around with.

Browser extension that helps you do your job better

A system designed for post-grad professionals that passively observes your computer use, then gives you the choice of either learning how to do your role better or automating the manual workflows you don’t want to do. It could identify concepts to refresh your memory on, identify skill gaps to close, etc. and then generate learning lessons for the most important ones. For the rest it figures out how to use Claude Code to automate what you dislike about your job.

Maybe it even actively finds content that you haven't seen before, like a search engine or content feed that finds not just stuff relevant to you but stuff relevant to you that you don't already know.

Confidence-first skills platform

Speak’s main product isn’t language learning. It’s confidence. Speaking out loud in a language you barely know is humiliating. That’s largely dissolved by talking into a computer. And, after some practice, being able to order a coffee fuels users with confidence.

A similar experience could be built for other notoriously embarrassing experiences like public speaking or job interviews. Put on a VR headset or Meta’s upcoming Orion glasses and see the audience or interviewer in front of you. Instead of practicing in the mirror, practice in front of the real thing until it becomes second nature.

BCIs for productivity

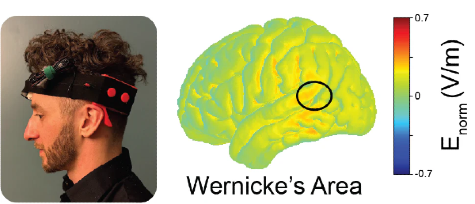

An early startup is making a headset that electrically stimulates the part of the brain most associated with language learning while the user interacts with the app. Though some academic work provides qualified scientific support for the premise, it’s not yet proven in commercial settings how much this boosts learning, and the product would likely have terrible adherence. But it’s both a fascinating early example of active modulation of healthy people for enhancement and a curious idea space to explore.

A second non-invasive BCI could identify a spiking EEG signal when your mind makes a connection with a piece of content, indicating that you just learned something, just had an aha moment, or just emotionally resonated with something. The product could, for instance, know what podcast you’re listening to and then automatically “clip” the context around the spiking signal. It then adds that clip to its note-taking app that integrates with Notion or whatever other tools you use. It could use spaced repetition where you read back all the most important things that sparked an idea for you that week. Compound portfolio company Atlas has proven that such non-invasive mental state sensing BCI tech can be built.

Entirely New Business Models

Maybe even more creative business models than pay per subscriber or school-level contracts are possible with superior value capture.

Vertically integrated trades company that trains formerly low-skilled workers

Electricians, HVAC techs, plumbers, solar installers, etc. are among the most in demand jobs relative to their supply. It currently takes years of training to make a novice skilled enough. Create a learning system that teaches low-skilled workers in record time for a durable cost advantage in some of the hottest, most difficult-to-automate markets. Not just learning the vocabulary of the trade but actually getting them job-ready for billable hours by themselves.

When the workers are uncertain on site, they could troubleshoot remotely with their phone or even Ray-Ban Meta glasses. Maybe a new certification standard could be accepted by insurers and regulators where each job is automatically logged and verified by AI.

Technological or product design advances could also simplify the product installation process itself.

Landing 14-Year-Olds at SpaceX

A digital tutor where the end objective is your dream job. The system teaches you only what you need to know about that role, cutting out years from a normal degree or career change. For instance, to become a rocket engineer at SpaceX the curriculum wouldn’t include Spanish and it may not even need all of thermodynamics, but only the parts necessary for that role. That said, the platform would of course have to be robust against producing candidates with extremely brittle on-the-job performance, the way coding bootcamps can.

Presumably the partner companies would like to see some form of that real-world performance. Typically, that’d be an internship. But, for those that can’t get that, this digital platform could create perfect video-realistic simulations of the jobs to be performed and the user does the actual job.

The platform would have direct vantage into who are the truly talented engineers. It’d then secure large-scale enterprise contracts with said organizations for funneling them quality talent.

Maybe a version of this could even be the next Thiel Fellowship or South Park Commons. Here, though, the user pays you for a while, and you get highly predictive data on their learning engine and outside-the-box thinking.

Are These Really Venture-Backable Ideas?

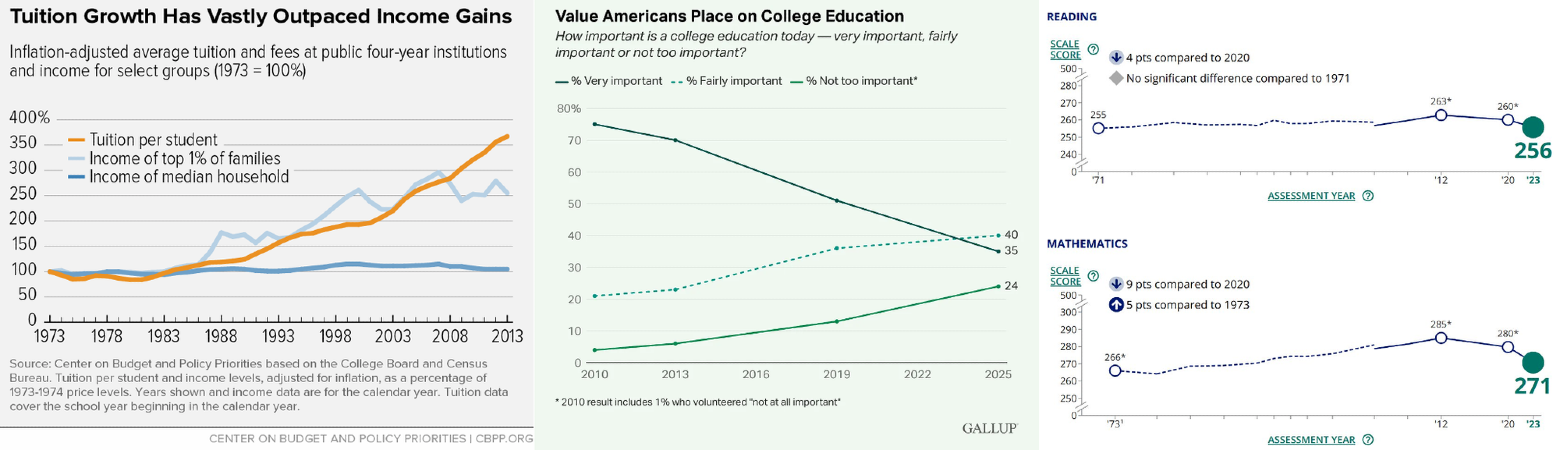

Now, you may say that these ideas are all fun and well but, as Jerry Maguire said, “show me the money!” There’s no doubt that to date, edtech’s economics have not penciled out.

In theory, the market for edtech is massive with largely untapped potential. In the US alone, the spend on K-12 is $950B, higher education is $700B, and tutoring is $25B.

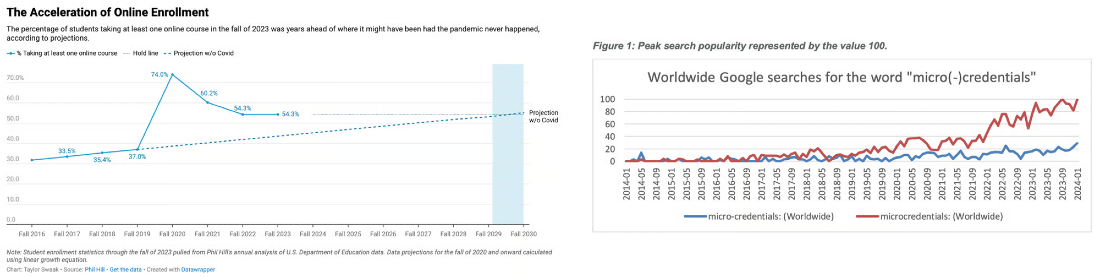

And, the underlying macro tailwinds remain in place.

But startups have only been able to touch a puny fraction of the total spending. Like other interpersonal service industries (e.g. healthcare) the bar for meaningful disruption is human-level cognition and interaction. As detailed in the sections above, we expect that gap to close in the next couple years and the realistically addressable market to grow gradually towards the theoretical TAM.

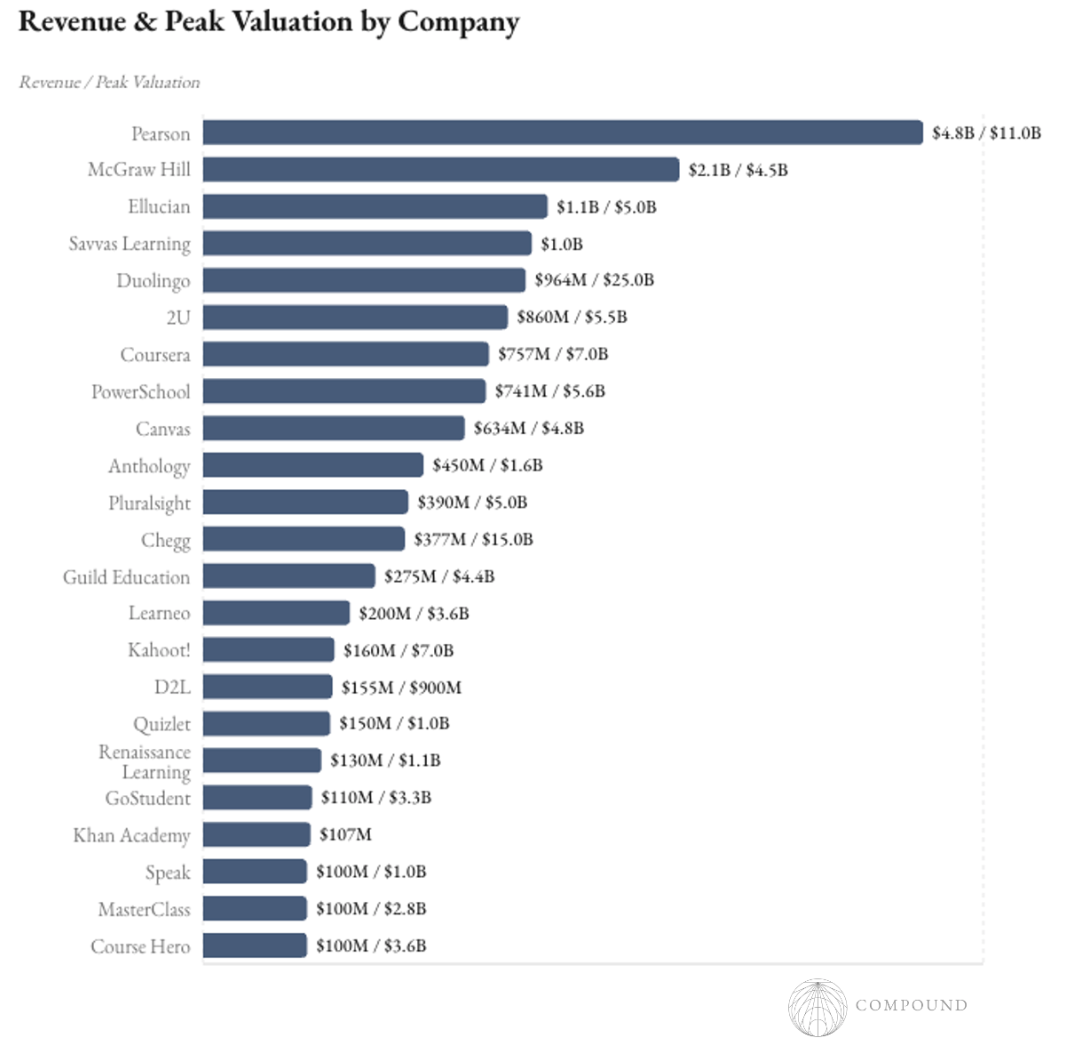

Even still, prior technological paradigms resulted in some venture-scale outcomes.

However, even for those prior generation companies that managed to reach scaled revenue, their economics often ain’t pretty.

They have a penny-pinching customer base, a fundamentally misaligned business model, the worst churn rates in SaaS, and high upfront content costs.

It’s not a coincidence that almost all edtechs aren’t VC-funded but instead are non-profits, billionaire pet projects, or bootstrapped.

Business Model

Real substantive learning is uncomfortable, even painful. Selling pain to people is uphill sledding. Especially if the pain is optional.

So, all successful DTC edtechs reduce the pain of learning via mental shortcuts (Chegg, ChatGPT) and / or swap out substantive learning for dopamine-rich gamification (Duolingo). This great post from the Duolingo CPO details exactly how they cracked the dopamine circuit.

These apps all faithfully desecrate the core invariants of effective learning, because each invariant hurts engagement. Making the target skill the only route to success raises difficulty and drives up failure. Requiring mastery before moving on creates choke points and user frustration. Prioritizing active output over passive consumption increases cognitive load and shortens sessions.

Optimizing for DAU in the mass market ensures that learning is at best a lucky accident.

But the pressure for companies to take these shortcuts is immense because the churn rate in DTC education apps is possibly the highest of any category out there.

DTC offerings must also show a return on investment within 7-14 days for the user to keep going, but that’s incongruous with the months-long learning process.

Alternatively, you don’t sell broccoli to children or people learning for fun. You sell it when they have no choice but to eat the broccoli. And preferably sell to those forcing them to eat it.

The contracts here can be $50-200K for K-12 districts and $500K-$10M for colleges. But sales cycles are like pulling teeth over several years, discretionary spend is under $30K, every state and even district does things differently so sales staff are large despite capped revenue, you're competing with Steve who's been selling to Mary for 15 years and takes her out golfing, and schools are currently cutting back on contracts after a COVID-driven splurge.

This results in a continuum.

1 NRR for Pearson, McGraw Hill, Ellucian, PowerSchool, Canvas, Pluralsight, D2L, and the enterprise businesses of Coursera and Udemy were all 95-105%.

3 MOOCs’ completion rates sit around 6%. The retention rates of Duolingo, Masterclass, and Chegg are 9-40% (in 2021, depending on monthly vs. annual subscription), 48%, and 7%, respectively.

Content Costs

Currently, creating a well designed course requires a huge upfront cost to pair with its uncertain utilization. Depending on the company, it takes a year with 2-20 engineer and subject matter expert employees for the digital tutor companies to add each new course.

MOOCs cost $40-300K per course built, making the industry structure concentrated in the organizations that built the biggest customer base with highest utilization to spread the fixed costs over. Masterclass is likely on the higher end given its unique positioning around celebrity partners and “beautiful and cinematic” production quality.

Meanwhile, marketplace business models like Coursera swap out the upfront cost by paying third party creators 20%+ of revenue.

On top of the upfront course creation sit ongoing operating and marketing expenses.

No wonder most of these organizations are non-profits, whether by choice or not!

The Shifting Economics

Large school system-level contracts will always be attractive for products that are truly essential to operations. But that ramp takes many years, and core to our thesis is that DTC economics may become meaningfully more attractive in the meantime.

Inferencing frontier models anew for each question would clearly blow edtech’s economics even more out of whack. That’s until either far more intelligent models than exist today get distilled into small models in say 4 years and / or frontier models are run locally to drive inference cost to that of electricity.

In the meantime, one could also easily cache frequently used questions, still route using a knowledge graph backbone, and have deterministic-generative hybrid structures where only portions are generated on the fly, among other engineering tricks.

If one can figure that out, what excites us is that for the first time such a startup may be venture-backable and a good business at scale.

- Churn may drop from an experience that’s both a superhuman tutor for substantive learning and some combination of engaging, fulfilling, and confidence-boosting.

- It may no longer take a new team ~5 years to get the first couple courses ready with a linear rollout of other courses thereafter. It may be faster to the first course and would certainly be a superlinear deployment of new courses thereafter. So, the timelines finally accord with the duration of a venture fund.

- A system that can generate any arbitrary lesson at superhuman level could plausibly become the go-to learning partner throughout the course of people’s lives from middle school to late career, learning their styles and preferences over time. That duration would spike LTV and the personalization would make it stickier. All edtechs share this fantastical dream, but hand-crafted lessons make that too expensive and slow to ever achieve. This aspect of the thesis is similar to Compound portfolio company Wayve, which pioneered the end-to-end deep learning approach within AVs in 2017 while Waymo was still writing driving rules by hand. It aims to be the first company to 100 cities.

- The inference cost replaces what is already the expensive and slow process of curriculum creation to begin with.

- Returns to scale would come from continually improving the system’s understanding of how people learn and how to generate the most effective content.
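To see why churn and subscription duration dominate these economics, here is a back-of-the-envelope sketch using the standard constant-churn approximation, where expected subscriber lifetime is 1 / monthly churn. The $20 price and both churn rates are made-up inputs for illustration, not figures from any company.

```python
def expected_ltv(monthly_price: float, monthly_churn: float, gross_margin: float = 1.0) -> float:
    """Lifetime value under constant monthly churn.

    Assumption: a fixed fraction of subscribers cancels each month, so the
    expected lifetime is 1 / monthly_churn months.
    """
    if not 0 < monthly_churn <= 1:
        raise ValueError("monthly_churn must be in (0, 1]")
    return monthly_price * gross_margin / monthly_churn


# Hypothetical $20/month product at high vs. low monthly churn.
for churn in (0.25, 0.05):
    months = 1 / churn
    print(f"churn {churn:.0%}: ~{months:.0f} months, LTV ${expected_ltv(20, churn):.0f}")
```

In this toy model, cutting monthly churn from 25% to 5% stretches the expected subscription from about 4 months to about 20 and multiplies LTV fivefold, before counting any savings on content costs.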

Rapid Learning May Soon Become Existential

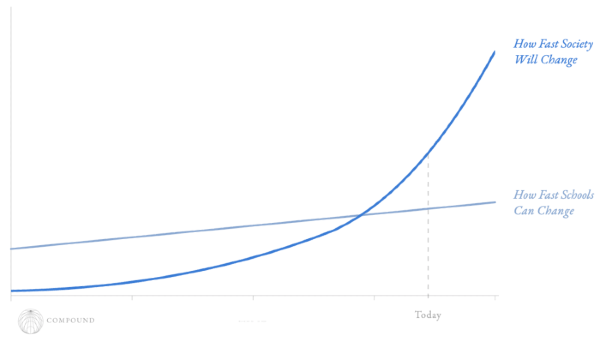

AGI’s arrival in the next few years will radically disrupt practically all jobs and eliminate many, possibly making rapidly learning new skills existential to a wide swath of the population. Traditional schooling has never been well equipped to adapt to society’s needs and is about to get more out of touch with each passing year. Meanwhile, mid-career tutoring may become non-optional for those attempting an arms race with AI and those changing lanes entirely.

Maybe in the longer term, as humans’ relative economic usefulness erodes, curiosity-driven learning will become a primary pastime to keep our minds sharp. If what we now do for work becomes what we do for fun, what kind of a learning and experiential environment would still give us purpose?

So You’re Telling Me There’s a Chance

All told, it’s conceivable that in the future churn is low (at least for edtech), subscriptions last years or lifetimes and push up LTV, returns to scale increase, time-to-deployment shrinks from years to real-time, all end markets are for the first time addressable by one company, and that end market suddenly expands with many consumers feeling an existential need for the product.

Not saying that’s our base case. These ideas remain borderline uninvestable, especially given that ChatGPT is the front door to consumer engagement, Google has already shown a proclivity to give away education products like Classroom for free and has built NotebookLM into a multi-hundred person team, and in maybe 12-18 months an 80-90% polished product could be one-shotted by Claude. These forces may forever pinch unit economics from both ends.

But if a company starts today (six months from now is possibly even too late), it may have just enough time to build a product that users truly love, trust, and build the social aspects of learning around. From there, hope that the data from running experiments on users’ learning processes improves the product’s learning efficiency and effectiveness fast enough to maintain a durable edge and that the frontier labs continue to largely ignore education in pursuit of greener pastures.

Maybe, like back in the day when Facebook’s professional network lost to startup LinkedIn because users wanted to separate their professional and social lives, there will even be some rebellion from consumers to psychologically separate the companies that disrupt their jobs from their intent to improve themselves and seek new jobs. Anthropic vibes but only here to help you.

Given that it’s the only vertical where no category-defining AI startups exist, it seems worth trying.

The optimistic vision is easy to daydream about. Every child or adult receives world-class, infinitely patient, truly engaging instruction calibrated precisely to their needs. They learn more efficiently, at their own pace, systematically. Teachers can focus on what only humans can genuinely do: motivation, relationship-building, compassion, the human dimensions of learning.